Nvidia has announced a compact AI-RAN solution designed for installation at cell sites. The company released details of a system built to fit within the space and power constraints of cell-site deployments.

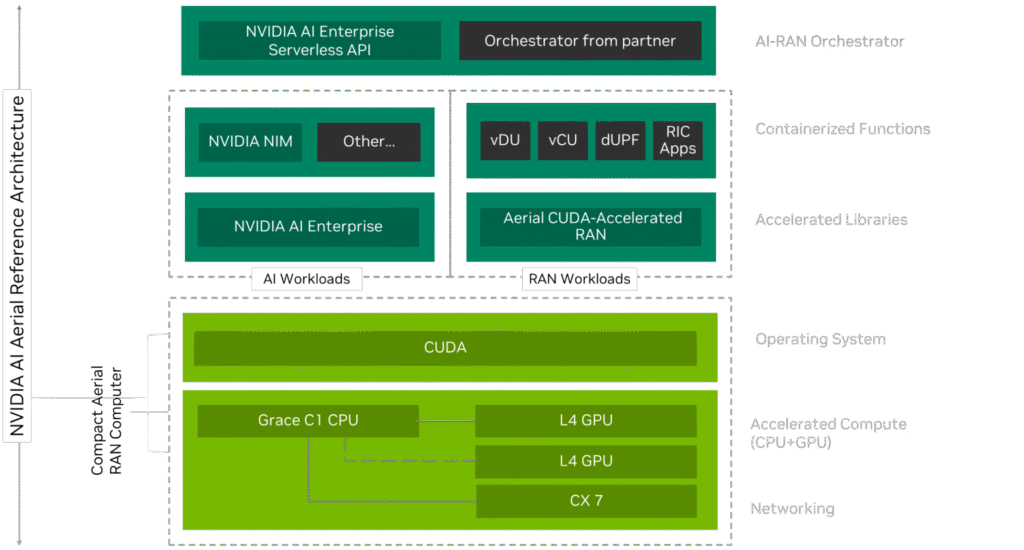

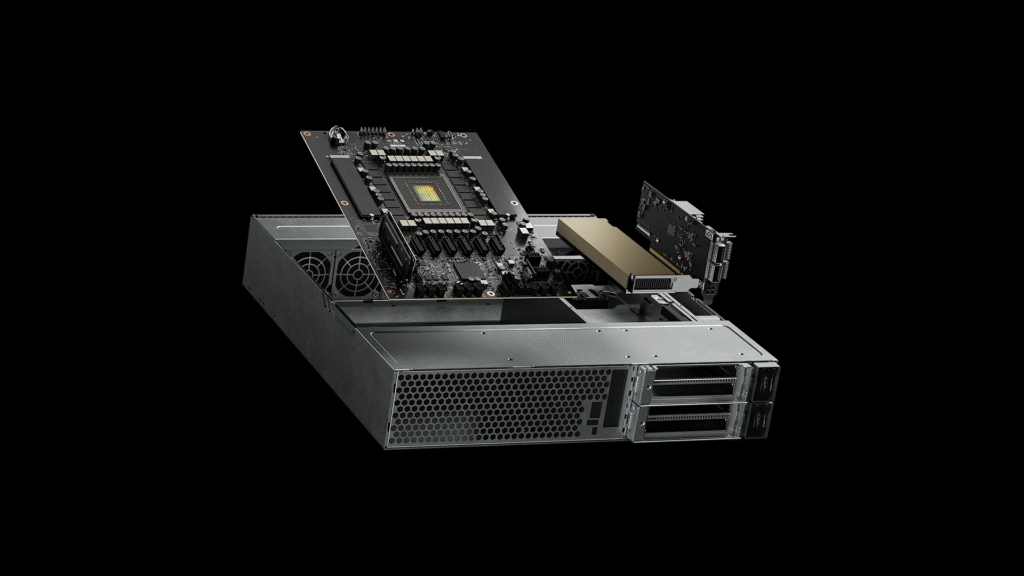

The Compact Aerial RAN Computer (ARC-Compact) is a 2RU, 17-inch, half-depth system that, according to Nvidia, matches the data-rate-to-power ratio of “traditional baseband systems used today”. It is based on Nvidia’s Grace CPU C1, which features 72 Arm Neoverse V2 cores. Radio or AI functions that require acceleration are served by an NVIDIA L4 Tensor Core GPU*, and Ethernet connectivity is provided by an NVIDIA ConnectX-7 network interface card (NIC).

ARC-Compact supports a mix of TDD and FDD, as well as standard 4TR and massive 64TR MIMO. It handles up to 30 sector carriers and 25 Gbps of system throughput, and conforms to the O-RAN 7-2 and 7-3 RU splits. Nvidia said it also supports both in-line and lookaside Layer 1 functions. It comes with embedded NVIDIA CUDA-accelerated RAN algorithms (cuPHY, cuMAC) to improve spectral efficiency and performance.
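To put the two headline capacity figures side by side, the sketch below divides the quoted 25 Gbps system throughput by the quoted maximum of 30 sector carriers. It is a back-of-envelope illustration only: the function name is hypothetical, and it assumes throughput is spread evenly across carriers, which real deployments mixing 4TR and 64TR massive MIMO carriers will not do.

```python
# Back-of-envelope average using only the two headline figures quoted for
# ARC-Compact. ASSUMPTION: throughput is spread evenly across all carriers;
# in practice 4TR and 64TR massive MIMO carriers carry very different loads.
SYSTEM_THROUGHPUT_GBPS = 25.0  # quoted system throughput
MAX_SECTOR_CARRIERS = 30       # quoted maximum number of sector carriers


def average_per_carrier_mbps(throughput_gbps: float, carriers: int) -> float:
    """Return the mean throughput per sector carrier in Mbps (hypothetical helper)."""
    if carriers <= 0:
        raise ValueError("carriers must be positive")
    # Convert Gbps to Mbps, then share it equally across carriers.
    return throughput_gbps * 1000.0 / carriers


if __name__ == "__main__":
    avg = average_per_carrier_mbps(SYSTEM_THROUGHPUT_GBPS, MAX_SECTOR_CARRIERS)
    print(f"~{avg:.0f} Mbps per sector carrier at full load")
```

The even split is a simplification; it gives an order-of-magnitude feel for per-carrier capacity, not a planning figure.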

Go-to-market partners

Nvidia said the design would be made available through multiple OEM and ODM partners, including Foxconn, Lanner, Quanta Cloud Technology, and Supermicro, with which it is partnering on the development of Grace CPU C1-based systems.

“We expect to see various configurations to support telecom distributed AI-RAN use-cases in the market later this year,” Kanika Atri, senior director for telco marketing at NVIDIA, wrote in a technical blog post.

ARC-Compact will also serve as the reference architecture for the distributed RAN (D-RAN) option in Nvidia’s collaboration with T-Mobile.

The post added that Nokia has received seed systems of NVIDIA ARC-Compact through the early access program and is testing its 5G Cloud RAN software on them, including early benchmarking.

Nvidia added that Samsung is expanding its AI-RAN collaboration with NVIDIA to include 5G vRAN integration with NVIDIA ARC-Compact for distributed AI-RAN solutions. Samsung has already completed a proof-of-concept verifying integration between its vRAN software and the NVIDIA L4 GPU. It is now evaluating its vRAN software on NVIDIA Grace CPU C1 and NVIDIA L4 Tensor Core GPUs, using them to accelerate additional AI workloads, including AI/ML algorithms, for further performance and efficiency gains.

On a par with state-of-the-art ASICs

During his keynote speech at Computex, Nvidia CEO Jensen Huang said that after six years of work to refine and optimise a fully accelerated RAN stack, Nvidia’s AI-RAN is now, in terms of data rate per watt, “on a par with state-of-the-art ASICs”.

“Once we can do that,” he continued, “once we can achieve that level of performance and functionality, after that we can layer on top AI. Now we have the ability to introduce the idea of AI on 5G, or AI on 6G, along with AI on computing.”

Ronnie Vasishta, Nvidia’s telecom lead, said on LinkedIn, “This compact Aerial RAN Computer, which is software defined and GPU accelerated, is perfect for deployment at the cell site and running RAN centric workloads.”

The technical blog reiterated the two reasons Nvidia sees for AI-RAN: first, to serve as part of an AI fabric, and second, to boost the performance of the RAN itself.

“This [an intelligent network fabric] requires embedding AI into radio signal processing for X-factor performance and efficiency gains, and accelerating cell sites to serve AI traffic, bringing AI inference as close as possible to users.”

That final point, the provision of AI inferencing at the edge, is still the great unknown of the AI-RAN vision. Economically, it will be critical to any model of GPU-based platform uptake within the RAN, especially in D-RAN deployments.

* The L4 Tensor Core GPU PCIe plug-in card supports FP8 Tensor Core operations and carries 24 GB of GPU memory, delivering up to 485 teraFLOPS of FP8 performance (with sparsity). This makes it suitable for edge AI workloads such as video search and summarisation and vision language models, in addition to radio Layer 1 and some Layer 2 functions such as scheduling. Nvidia says the L4 delivers 120x higher AI video performance than CPU-based solutions, and it comes in a low-profile form factor with a 72W TDP.
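Because the article frames AI-RAN in terms of performance per watt, a rough sense of the L4's compute density can be had by dividing its quoted peak FP8 figure by its quoted TDP. The sketch below does just that; the helper name is hypothetical, and the result is a datasheet ratio (peak throughput over thermal design power), not a measured or sustained efficiency.

```python
# Ratio of the L4's quoted peak FP8 throughput to its quoted TDP.
# ASSUMPTION: this is a datasheet ratio (peak, not sustained, and not
# measured at the wall), so it bounds efficiency rather than measuring it.
L4_PEAK_FP8_TFLOPS = 485.0  # peak FP8 figure quoted in the footnote
L4_TDP_WATTS = 72.0         # TDP quoted in the footnote


def peak_tflops_per_watt(tflops: float, watts: float) -> float:
    """Return peak teraFLOPS per watt of TDP (hypothetical helper)."""
    if watts <= 0:
        raise ValueError("watts must be positive")
    return tflops / watts


if __name__ == "__main__":
    ratio = peak_tflops_per_watt(L4_PEAK_FP8_TFLOPS, L4_TDP_WATTS)
    print(f"{ratio:.2f} peak FP8 TFLOPS per watt of TDP")
```

Real RAN and inference workloads will run well below peak, so this figure is best read as an upper bound.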