As it anticipates an increase in AI-powered services hitting its network, and works out how best to meet those AI needs, NTT DOCOMO said it has demonstrated the operation of AI applications directly on the general-purpose CPU resources of its commercial vRAN. It said this “confirmed the potential of a network architecture design to address rapid traffic growth driven by the expansion of AI services while optimising network operational costs.”

DOCOMO has been exploring options for “In-Network Computing”, in which AI processing is executed within the network itself with the aims of improving user experience and optimising network traffic. The operator wants to deploy high-performance GPUs and CPUs at suitable network nodes, enabling optimal placement and effective utilisation of computing resources, including various types of processors.

Its announcement comes as Dell releases a new edge Cloud RAN outdoor server, designed to enable very far edge vRAN and edge AI operations, as Ericsson last week announced its own intelligent radio units, and as AWS pulled back from a hardware approach of its own. As the industry still doubts the business models around edge AI, AI on RAN and edge compute in the mobile network, these operators are thinking about the best network models they can build to cost-effectively meet the potential opportunities.

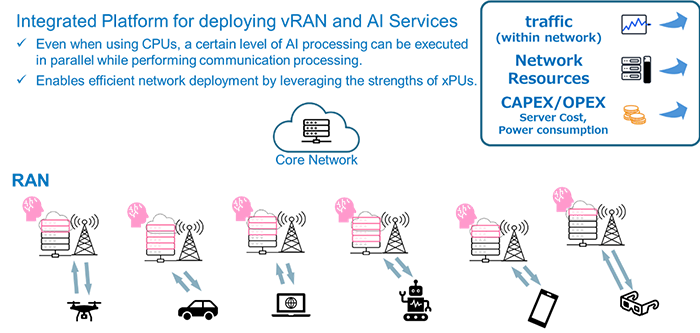

For the CPU demo, DOCOMO integrated a platform that enables vRAN functions and AI service applications to run simultaneously on CPU resources on general-purpose HPE servers. It said that the results confirmed that even when using CPUs, a “certain level” of AI processing can be executed in parallel with communication network processing. That means that flexible operation combining network and AI applications is possible without relying on dedicated high-performance accelerators, thereby expanding viable options for efficient network deployment.

How NTT DOCOMO envisages the before and after of an integrated vRAN platform, with distributed compute resources able to meet edge AI needs, as well as vRAN operations.

The demo set-up used vRAN base station software from NEC. The virtualisation infrastructure hosting the vRAN and AI applications was provided by Amazon Web Services (AWS). Accelerator cards for specific computational processing came from Qualcomm Technologies. The stack ran on HPE servers.

Although DOCOMO said it had used Qualcomm’s accelerator, it didn’t state what general-purpose CPU it used for the trial. And although the operator said it wants to meet both network optimisation and customer AI use cases, it didn’t state what AI workloads it ran within its demo.

SK Telecom’s ATHENA shows wisdom of real time RIC and complete HW/SW separation

SK Telecom’s latest 6G white paper, released this week, called for true vendor interworking of RAN components, a “complete” hardware/software separation, and re-introduced the concept of the (near) real time RIC into an AI-Native RAN architecture for 6G.

The paper, titled ATHENA*, stated that radio networks “should evolve into AI-native RAN, where the telecommunications network itself combines with AI technology.”

It said an AI-native RAN would both support RAN automation and optimisation, such as AI-enhanced link adaptation and predictive AI for energy saving, and provide infrastructure for AI applications such as low-latency inference-based services at the edge.

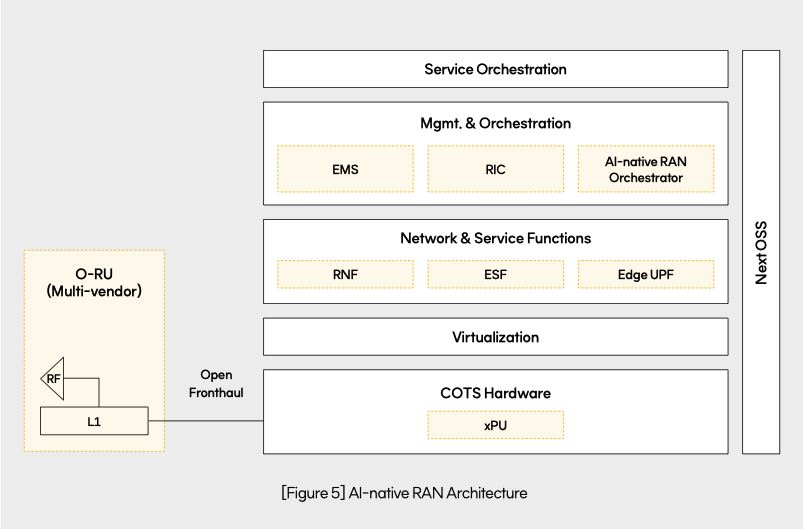

Its target architecture is one where network and service functions use xPU-based COTS servers to deliver telco functions alongside edge services, including AI functions and applications such as driving control or AR/VR.

Like NTT DOCOMO, SK Telecom’s architecture keeps open the CPU/GPU/NPU element, looking for a solution that lets it deploy the most appropriate design.

An edge UPF deployment is also necessary for efficient traffic processing at the edge. Additionally, it aims for an intelligent network that can optimise the network by collecting and analysing network and user data in real-time (RIC, RAN Intelligent Controller) and manage it efficiently through an AI-native RAN orchestrator.

This exposition of a real time RIC element goes a step further than most operators and their vendors have gone so far; most of the emphasis to date has been on a centralised non real time RAN Intelligent Controller.

The paper says that this architecture (xPU-based servers plus edge UPF) would redefine base stations as intelligent nodes. This would also require the “complete decoupling” of hardware and software, enabling the flexible deployment of RAN components as needed. It also said that equipment should interwork flexibly based on open interfaces “and be managed without vendor compatibility constraints.”

The operator said it has been “actively conducting research to improve base station performance by utilising AI” since 2022. It said research is underway on various chipsets, including GPUs, and on virtualised resource allocation technology, to derive the optimal AI-RAN architecture capable of simultaneously providing communication services and AI services. A year ago at MWC 2025 it showed RAN workload processing using GPUs through an L1 benchmark test, and the provision of AI services on a single hardware platform.

At the upcoming MWC Barcelona 2026, SKT said it will demo various AI agents for networks, AI-RAN technology that provides both communication and AI services, on-device AI-based antenna optimisation, and integrated communication and sensing technology that gathers environmental information via radio signals.

“Despite uncertainties in the 6G era, we will continue to create new growth opportunities by leading the evolution of future communication infrastructure over the next decade, prioritizing customer value and combining AI, virtualisation, openness, and Zero Trust security,” said Yu Takki, Head of Network Technology Office at SK Telecom.

* ATHENA stands for AI-native (integration of artificial intelligence throughout the network), Trust (adoption of Zero Trust security principles), Hyper-connectivity (seamless connections across diverse devices and environments), Experience (customer-centric innovation and enhanced user experience), opeN (openness through open-source technologies and collaborative ecosystems), and Agility (flexible and cloud-native network operations).