AI-native radio networks will reshape wireless architecture and design. What matters now is the ability to test new use cases and technologies quickly and efficiently, to accelerate innovation and strengthen industry competitiveness.

1. HOW AI-RAN DISRUPTS

As the industry looks toward 6G, artificial intelligence is no longer just an optimisation tool bolted onto the side of the network; it is becoming foundational. Embedding AI natively in the radio air interface is emerging as one of the most disruptive shifts in how wireless systems will be designed, deployed and tested.

For decades, network engineering has relied on statistical averages. Teams collected vast amounts of channel sounding data, distilled it into propagation models representing typical urban or suburban environments, and built systems to perform well against those generalised assumptions. AI-RAN is disruptive because it changes that logic. Instead of designing for the “average” network, operators and vendors can now tune performance to real-world, site-specific conditions, down to an individual campus or deployment. Field studies by major companies have shown that this alone can deliver two to three decibels of gain. Those gains may sound small, but at the edge of coverage they translate directly into better reliability, longer battery life and higher usable capacity. And optimisation doesn’t stop at the radio link. Traffic is unevenly distributed: often 80% of it flows through just 20% of the infrastructure. AI-driven routing and resource allocation promise to smooth out those imbalances and make better use of existing capacity.
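
Why a 2 to 3 dB gain matters most at the edge of coverage can be checked against the Shannon bound. The sketch below is an idealised calculation, assuming an AWGN link operating at the Shannon limit; the SNR operating points are illustrative choices, not values from the field studies.

```python
import math


def shannon_se(snr_db: float) -> float:
    """Shannon spectral efficiency (bit/s/Hz) of an AWGN link at the given SNR."""
    return math.log2(1 + 10 ** (snr_db / 10))


def relative_gain(snr_db: float, gain_db: float) -> float:
    """Fractional capacity increase when the SNR improves by gain_db."""
    return shannon_se(snr_db + gain_db) / shannon_se(snr_db) - 1


# Illustrative operating points: deep cell edge, cell edge, cell centre
for snr_db in (-5.0, 0.0, 20.0):
    for gain_db in (2.0, 3.0):
        pct = 100 * relative_gain(snr_db, gain_db)
        print(f"SNR {snr_db:+5.1f} dB, +{gain_db:.0f} dB gain: +{pct:.0f}% bit/s/Hz")
```

At 0 dB SNR, a 3 dB gain lifts the bound by roughly 58%; at 20 dB, by roughly 15%. Practical links fall short of Shannon, but the same qualitative trend, proportionally larger gains at low SNR, is generally expected.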

The promise of AI embedded in the network also opens up a range of potential new use cases and opportunities for monetisation, from integrated sensing to AI-driven applications that span devices and networks. Still, the message from standards bodies is clear: AI must prove a measurable benefit before it becomes part of the specification. AI doesn’t automatically solve anything; you need testability and clear gains. That’s why 3GPP is careful about what becomes a work item.

2. AI-RAN’S ARCHITECTURAL RETHINK

Much of the current discussion separates AI into two complementary domains. AI for RAN focuses on optimising the network itself: scheduling, routing, and radio performance. AI on RAN is about what runs on top of it: applications such as large language models, real-time translation, and edge inference services that increasingly rely on network compute. Today, much of that processing happens either entirely on the device or somewhere deep in the cloud. Over time, workloads are expected to shift dynamically between device and network depending on link quality, latency and battery life.

If you drain the battery, users panic. So the system needs to decide intelligently where the compute happens. This is driving a broader rethink of shared infrastructure, in which the same edge compute resources may support both AI applications and RAN optimisation algorithms. That work is spread across different bodies. The AI-RAN Alliance focuses on developing use cases and trials and on establishing the benefits of this shared architecture. The standards development organisations will then work to standardise the enabling technology for those use cases.
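
The device-versus-network decision can be pictured as a per-task cost comparison. This is a toy sketch, not an AI-RAN Alliance or 3GPP mechanism: the `Task` and `LinkState` fields, the energy weighting and all numbers are hypothetical, chosen only to show how link quality, latency and battery level can pull the same task in different directions.

```python
from dataclasses import dataclass


@dataclass
class LinkState:
    uplink_mbps: float   # current uplink throughput
    rtt_ms: float        # round-trip time to the edge compute node
    battery_pct: float   # remaining device battery, 0-100


@dataclass
class Task:
    input_mb: float        # data that must be uploaded if the task is offloaded
    local_ms: float        # execution time on the device
    edge_ms: float         # execution time on network edge compute
    local_energy_j: float  # device energy to run the task locally
    radio_j_per_mb: float  # device energy to transmit one megabyte


def choose_placement(task: Task, link: LinkState, deadline_ms: float) -> str:
    """Return "device" or "network" for one inference task (hypothetical cost model)."""
    upload_ms = task.input_mb * 8 / link.uplink_mbps * 1000  # MB -> Mbit -> ms
    candidates = {
        "device": (task.local_ms, task.local_energy_j),
        "network": (upload_ms + link.rtt_ms + task.edge_ms,
                    task.input_mb * task.radio_j_per_mb),
    }
    feasible = {k: v for k, v in candidates.items() if v[0] <= deadline_ms}
    if not feasible:
        # Nothing meets the deadline: take whichever finishes first
        return min(candidates, key=lambda k: candidates[k][0])

    # Device energy is weighted more heavily, relative to latency, as the battery drains
    energy_weight = 1.0 + (100.0 - link.battery_pct) / 25.0

    def cost(k: str) -> float:
        latency_ms, energy_j = feasible[k]
        return latency_ms / deadline_ms + energy_weight * energy_j

    return min(feasible, key=cost)


task = Task(input_mb=0.5, local_ms=80, edge_ms=10, local_energy_j=2.0, radio_j_per_mb=0.8)
print(choose_placement(task, LinkState(uplink_mbps=100, rtt_ms=10, battery_pct=80), 100))
print(choose_placement(task, LinkState(uplink_mbps=5, rtt_ms=10, battery_pct=80), 100))
```

With a 100 Mbit/s uplink the task is offloaded; at 5 Mbit/s the upload alone exceeds the 100 ms deadline, so it stays on the device. A learned policy could replace the hand-written `cost()` function while keeping the same inputs.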

CSI FEEDBACK: At MWC 2025, Qualcomm and Rohde & Schwarz showcased CSI feedback compression using the CMX500 one-box tester.

3. FROM RESEARCH TO STANDARDS – CSI

One concrete example is Channel State Information (CSI) feedback compression. Traditionally, large amounts of uplink resources are consumed simply to report channel conditions back to the network. With AI-based compression models running jointly on the device and in the network, that overhead can be reduced without sacrificing downlink performance.

Alternatively, for the same uplink overhead as conventional methods, AI-compressed CSI can convey richer channel information, enabling better precoding and thus higher downlink capacity. Rohde & Schwarz and Qualcomm showed in 2025 that CSI feedback compression is testable and produces a significant gain. The concept has since matured, and 3GPP has adopted two-sided AI models as a formal Release 20 work item, expected to conclude in the second half of 2027. That work will examine how AI models on the device and in the network can operate jointly in a standardised manner.
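
The two-sided split can be illustrated without any neural network by dividing a classical transform coder across the link: the device (encoder) moves its channel vector into the angular (DFT) domain and reports only the strongest coefficients, and the network (decoder) rebuilds the vector. In the Release 20 work both halves are trained AI models; here DFT truncation is a deliberately simple, non-AI stand-in, and the 32-antenna array, the three-path channel and the 8-bit quantisation figure are illustrative assumptions.

```python
import cmath
import math
import random


def dft(x):
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
            for k in range(n)]


def idft(coeffs):
    n = len(coeffs)
    return [sum(coeffs[k] * cmath.exp(2j * math.pi * k * t / n) for k in range(n)) / n
            for t in range(n)]


def ula_channel(n_ant, n_paths, rng):
    """Narrowband channel on a half-wavelength uniform linear array with a few paths."""
    h = [0j] * n_ant
    for _ in range(n_paths):
        theta = rng.uniform(-math.pi / 3, math.pi / 3)
        gain = complex(rng.gauss(0, 1), rng.gauss(0, 1)) / math.sqrt(2 * n_paths)
        for m in range(n_ant):
            h[m] += gain * cmath.exp(1j * math.pi * m * math.sin(theta))
    return h


def ue_encode(h, k):
    """Device side: angular-domain transform, keep the k strongest coefficients."""
    coeffs = dft(h)
    strongest = sorted(range(len(coeffs)), key=lambda i: abs(coeffs[i]), reverse=True)[:k]
    return [(i, coeffs[i]) for i in sorted(strongest)]


def network_decode(report, n_ant):
    """Network side: zero-fill the unreported coefficients and transform back."""
    coeffs = [0j] * n_ant
    for i, c in report:
        coeffs[i] = c
    return idft(coeffs)


def nmse_db(h, h_hat):
    err = sum(abs(a - b) ** 2 for a, b in zip(h, h_hat))
    return 10 * math.log10(err / sum(abs(a) ** 2 for a in h))


def report_bits(n_ant, k, bits_per_real=8):
    """Nominal payload: index plus I/Q per kept coefficient (quantisation not simulated)."""
    return k * (math.ceil(math.log2(n_ant)) + 2 * bits_per_real)


rng = random.Random(7)
h = ula_channel(32, 3, rng)
for k in (4, 8, 16):
    h_hat = network_decode(ue_encode(h, k), len(h))
    print(f"k={k:2d}: NMSE {nmse_db(h, h_hat):6.1f} dB, ~{report_bits(32, k)} bits "
          f"(uncompressed: {32 * 2 * 8} bits)")
```

Keeping more coefficients lowers the reconstruction error at the cost of a larger report. A trained two-sided model aims to improve that trade-off, for example lower error at the same payload.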

Standardising this approach introduces new challenges, such as defining reference implementations, exchanging AI models between device and network, and co-ordinating behaviour across both ends of the link. These parts of the stack used to be completely independent; now they actually talk to each other. That’s new, and it’s exciting. Rohde & Schwarz and Qualcomm plan to demonstrate updated baselines at Mobile World Congress.
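
One of those coordination problems is simply ensuring that the encoder on the device and the decoder in the network belong together. The sketch below is purely hypothetical: `ModelInfo`, its fields and the selection rule are illustrative and do not reflect Release 20 signalling, which is still being specified.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ModelInfo:
    model_id: str      # hypothetical identifier shared by a matched encoder/decoder pair
    version: int
    payload_bits: int  # CSI report size the model produces (encoder) or expects (decoder)


def pair_models(device_encoders, network_decoders, max_payload_bits) -> Optional[ModelInfo]:
    """Pick a device encoder for which the network holds a matching decoder.

    Prefers the newest version, then the smallest payload, within the uplink budget.
    Returns None when nothing matches, i.e. fall back to conventional CSI reporting.
    """
    decoders = {(d.model_id, d.version): d for d in network_decoders}
    compatible = [
        e for e in device_encoders
        if (e.model_id, e.version) in decoders
        and decoders[(e.model_id, e.version)].payload_bits == e.payload_bits
        and e.payload_bits <= max_payload_bits
    ]
    if not compatible:
        return None
    return max(compatible, key=lambda e: (e.version, -e.payload_bits))


device = [ModelInfo("csi-a", 1, 128), ModelInfo("csi-a", 2, 96), ModelInfo("csi-b", 1, 64)]
network = [ModelInfo("csi-a", 1, 128), ModelInfo("csi-a", 2, 96)]
print(pair_models(device, network, max_payload_bits=100))  # csi-a version 2, 96 bits
print(pair_models(device, network, max_payload_bits=64))   # None: csi-b has no decoder
```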

DPoD: Nokia and Rohde & Schwarz have tested a new AI receiver, using the R&S SMW200A vector signal generator and FSWX signal and spectrum analyser. The demo will be shown at MWC 2026.

4. NEURAL RECEIVERS, DIGITAL TWINS AND DIGITAL POST-DISTORTION

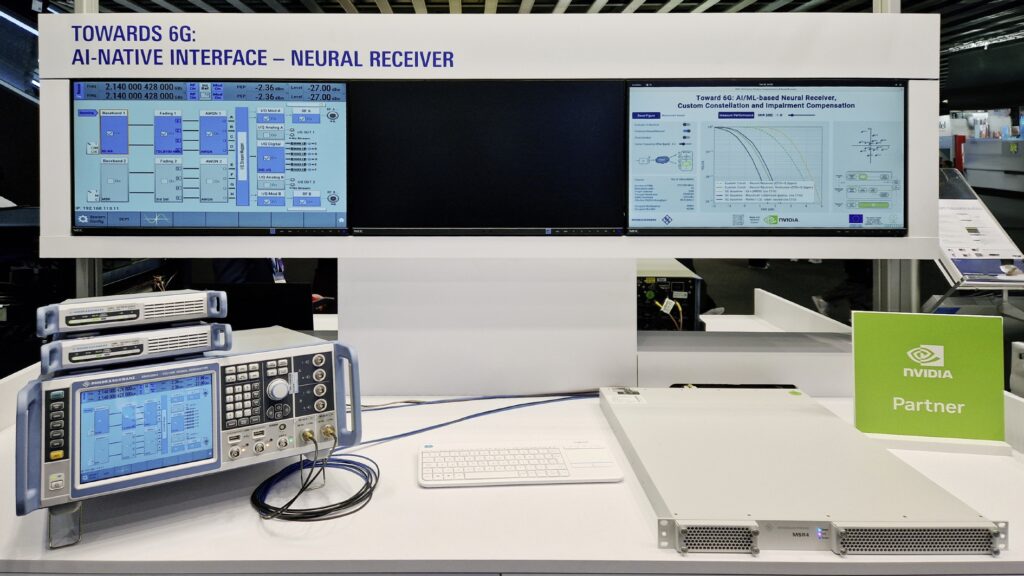

Another fast-moving area is the neural receiver, in which parts of the classical receiver chain are replaced with neural network elements. In recent years, Rohde & Schwarz and NVIDIA have laid the groundwork for using digital twins to train and test neural receivers.

Working with Nokia, Rohde & Schwarz has been evaluating AI-based digital post-distortion (DPoD). While devices already use digital pre-distortion to compensate for power amplifier non-linearities, Nokia applies AI at the base station to reverse the distortion after reception. The interesting opportunity is coordination: how much correction should happen on the device versus in the network, and how should it be split between pre-distortion and post-distortion? Where should the compute and energy be spent for the best overall efficiency? That balance will likely become dynamic.

To make development easier, Rohde & Schwarz has built a lab-based “digital twin” measurement setup that emulates these effects without needing a full base station. Using the Open Neural Network Exchange (ONNX) format, vendors can plug in their models and benchmark them in a standardised way. A signal generator emulates the user equipment, producing uplink signals distorted according to a given power amplifier characterisation; the post-distortion algorithms then run in our analyser, so that they can be compared directly. The goal is not to support a single vendor, but to provide an open, scalable testing environment for the ecosystem.
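
The benchmarking loop described above (emulated distorted uplink in, candidate correction scored at the receiver) can be mimicked in a few lines. This is a minimal sketch under stated assumptions: the PA is a textbook memoryless third-order model with a hypothetical coefficient `A3`, the "model under test" is a plain Python callable standing in for an ONNX-exported network, and the reference correction is an analytic fixed-point inverse rather than an AI receiver. Nothing here models the actual SMW200A/FSWX setup.

```python
import math
import random
from typing import Callable

A3 = 0.15  # hypothetical third-order compression coefficient of the UE power amplifier


def pa(x: complex) -> complex:
    """Memoryless third-order PA model with gain compression (AM/AM only)."""
    return x - A3 * x * abs(x) ** 2


def fixed_point_post_distorter(y: complex, iterations: int = 20) -> complex:
    """Receiver-side inverse of pa(): iterate x <- y + A3*x*|x|^2 until it settles."""
    x = y
    for _ in range(iterations):
        x = y + A3 * x * abs(x) ** 2
    return x


def evm_pct(ref, meas):
    err = sum(abs(r - m) ** 2 for r, m in zip(ref, meas))
    return 100 * math.sqrt(err / sum(abs(r) ** 2 for r in ref))


def benchmark(post_distorter: Callable[[complex], complex],
              n_symbols: int = 1000, seed: int = 1) -> float:
    """Emulate a distorted UE uplink and score a candidate correction by EVM (%)."""
    rng = random.Random(seed)
    scale = math.sqrt(0.05)  # 16-QAM scaled to 0.5 average power (peak |x|^2 = 0.9)
    levels = (-3, -1, 1, 3)
    tx = [complex(rng.choice(levels), rng.choice(levels)) * scale for _ in range(n_symbols)]
    rx = [pa(x) for x in tx]
    return evm_pct(tx, [post_distorter(y) for y in rx])


print(f"no correction:   {benchmark(lambda y: y):.2f}% EVM")
print(f"post-distortion: {benchmark(fixed_point_post_distorter):.4f}% EVM")
```

The uncorrected link shows roughly 10% EVM from compression of the outer 16-QAM points, and the exact inverse removes it. A learned post-distorter would be passed in as just another callable; real PAs also have memory effects, which this memoryless model ignores.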

Demo of an AI/ML-based neural receiver with NVIDIA at MWC 2024. This year, the ongoing collaboration focuses on digital twins.

5. HOW DIGITAL TWINS HELP DEVELOPMENT

Rohde & Schwarz is also extending its collaboration with NVIDIA in the area of digital twins. What began four years ago as synthetic training data generation has evolved into increasingly realistic simulations that combine 3GPP models, calibrated ray tracing, real-world fading, and now real-time hardware-in-the-loop testing.
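
At its core, a ray-traced digital twin hands the channel emulator a list of propagation paths, each with a complex gain, a delay and a Doppler shift, from which the frequency response seen by the receiver follows. A minimal sketch, assuming a per-subcarrier narrowband model and made-up path values for the `RayPath` entries; it does not represent how the SMW200A implements dynamic channel emulation.

```python
import cmath
import math
from dataclasses import dataclass


@dataclass
class RayPath:
    gain: complex       # complex path amplitude (hypothetical ray-tracer output)
    delay_s: float      # propagation delay
    doppler_hz: float   # Doppler shift caused by UE or scatterer motion


def channel_response(paths, freq_hz: float, t_s: float = 0.0) -> complex:
    """H(f, t) = sum_p a_p * exp(-j2*pi*f*tau_p) * exp(j2*pi*nu_p*t)."""
    return sum(
        p.gain
        * cmath.exp(-2j * math.pi * freq_hz * p.delay_s)
        * cmath.exp(2j * math.pi * p.doppler_hz * t_s)
        for p in paths
    )


# Illustrative site-specific scene: line of sight plus two reflections
scene = [
    RayPath(gain=1.0, delay_s=0.0, doppler_hz=0.0),
    RayPath(gain=0.6 * cmath.exp(1j * 0.4), delay_s=180e-9, doppler_hz=45.0),
    RayPath(gain=0.3 * cmath.exp(-1j * 1.1), delay_s=650e-9, doppler_hz=-20.0),
]

scs_hz = 30e3  # subcarrier spacing
for t_s in (0.0, 0.005):
    gains_db = [20 * math.log10(max(abs(channel_response(scene, sc * scs_hz, t_s)), 1e-12))
                for sc in range(0, 273 * 12, 400)]
    print(f"t = {t_s * 1e3:.0f} ms:", " ".join(f"{g:5.1f}" for g in gains_db))
```

The printed gains vary across the band (frequency selectivity from the delay spread) and change between the two snapshots 5 ms apart (time variation from the Doppler terms).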

At Mobile World Congress, the companies plan to demonstrate a live setup in which an open-source base station stack, enhanced with an AI-enabled downlink link adaptation mechanism, is connected to a commercial 5G user device. The testbed integrates the R&S SMW200A vector signal generator, with its dynamic channel emulation capabilities, and the FSW signal and spectrum analyser. Together, these instruments emulate complex radio channels based on ray tracing, enabling such AI-enhanced base station implementations to be verified under site-specific conditions. Step by step, the goal is to close the gap between simulation and reality. Being able to run a digital twin in the lab, and to see how the 5G signal propagates, how the base station responds and how the AI algorithms perform, is a huge advantage in scaling testability.
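
For context on what an AI-enabled mechanism improves on: conventional downlink link adaptation commonly pairs CQI-based MCS selection with outer-loop link adaptation (OLLA), which nudges a SINR offset up on each HARQ ACK and down on each NACK. The sketch below is that classic baseline, not the AI method in the demo; the MCS threshold table, the toy BLER curve and the 2 dB optimistic CQI bias are all hypothetical.

```python
import math
import random


class OuterLoopLinkAdaptation:
    """Classic OLLA: adjust a SINR offset on HARQ ACK/NACK so BLER converges to a target."""

    def __init__(self, bler_target: float = 0.1, step_up_db: float = 0.01):
        self.offset_db = 0.0
        self.step_up_db = step_up_db
        # Equilibrium (1 - BLER) * up == BLER * down pins the long-run BLER to the target
        self.step_down_db = step_up_db * (1 - bler_target) / bler_target

    def update(self, ack: bool) -> None:
        self.offset_db += self.step_up_db if ack else -self.step_down_db

    def select_mcs(self, reported_sinr_db: float, thresholds_db) -> int:
        """Highest MCS whose threshold the corrected SINR meets (lowest MCS if none)."""
        effective_db = reported_sinr_db + self.offset_db
        mcs = 0
        for idx, thr in enumerate(thresholds_db):
            if effective_db >= thr:
                mcs = idx
        return mcs


def simulate(n_slots=20_000, actual_sinr_db=10.3, cqi_bias_db=2.0, seed=3):
    rng = random.Random(seed)
    thresholds_db = [float(t) for t in range(-5, 25)]  # hypothetical MCS switching points
    olla = OuterLoopLinkAdaptation()
    nacks = 0
    for _ in range(n_slots):
        # The UE's report is optimistic by cqi_bias_db; OLLA has to learn that
        mcs = olla.select_mcs(actual_sinr_db + cqi_bias_db, thresholds_db)
        # Toy BLER curve: exactly 10% when the actual SINR equals the MCS threshold
        p_err = 1 / (1 + 9 * math.exp((actual_sinr_db - thresholds_db[mcs]) / 0.5))
        ack = rng.random() >= p_err
        nacks += not ack
        olla.update(ack)
    return olla.offset_db, nacks / n_slots


offset_db, bler = simulate()
print(f"learned offset {offset_db:+.2f} dB, measured BLER {bler:.3f}")
```

The loop settles at a negative offset that largely cancels the CQI bias and holds BLER near 10%. It converges slowly and knows nothing about the site, which makes it one plausible target for an AI-based approach under site-specific channels.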

6. ISAC: WHEN AI MEETS SENSING

Beyond traditional communications, Rohde & Schwarz is also working on Integrated Sensing and Communications (ISAC). Potential use cases range from traffic monitoring and safety alerts to wide-area drone detection and even indoor vital-sign monitoring. ISAC is not directly an AI-RAN topic, because the physics of radar detection is well understood; AI becomes essential when interpreting what the radar sees. With limited bandwidth and relatively low frequencies, raw radar resolution is coarse. AI helps classify objects by learning signatures such as movement patterns and micro-Doppler effects, for example the swing of a person’s arms. This allows a system to distinguish between different people, to tell a pedestrian from a trash bin, or to detect drones near airports. This is where AI on RAN comes in: the raw measurements are taken out of the network, processed and compared against the known signatures of objects or people in order to classify them. Using its radar target emulators, originally developed for automotive testing, Rohde & Schwarz can simulate objects at distances from just a few metres out to 20 kilometres, including their micro-Doppler characteristics. These tools allow developers to train and validate AI-based sensing algorithms before real-world deployment.
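
The point that raw resolution is coarse, while micro-Doppler carries the useful detail, can be made concrete with two textbook radar relations: range resolution ΔR = c / (2B) and Doppler shift f_D = 2 v f_c / c. The sketch assumes a monostatic geometry (ISAC deployments may be bistatic, which changes the Doppler geometry), a 3.5 GHz carrier and a toy sinusoidal arm-swing model; all values are illustrative.

```python
import math

C = 299_792_458.0  # speed of light, m/s


def range_resolution_m(bandwidth_hz: float) -> float:
    """Radar range resolution: delta_R = c / (2B)."""
    return C / (2 * bandwidth_hz)


def doppler_hz(radial_speed_mps: float, carrier_hz: float) -> float:
    """Two-way (monostatic) Doppler shift: f_D = 2 v f_c / c."""
    return 2 * radial_speed_mps * carrier_hz / C


def walking_micro_doppler(t_s, carrier_hz, body_mps=1.4, arm_peak_mps=2.0, swing_hz=1.0):
    """Toy micro-Doppler track: bulk body motion plus a sinusoidal arm swing."""
    v = body_mps + arm_peak_mps * math.sin(2 * math.pi * swing_hz * t_s)
    return doppler_hz(v, carrier_hz)


for bw_hz in (20e6, 100e6, 400e6):
    print(f"B = {bw_hz / 1e6:5.0f} MHz -> range resolution {range_resolution_m(bw_hz):.2f} m")

f_c = 3.5e9  # illustrative mid-band carrier
track = [walking_micro_doppler(t / 10, f_c) for t in range(11)]
print("arm-swing Doppler over 1 s (Hz):", " ".join(f"{f:.0f}" for f in track))
```

Even 100 MHz of bandwidth only separates targets about 1.5 m apart in range, while the arm swing modulates the Doppler by tens of hertz around the body’s bulk shift; that periodic signature is what a classifier can learn.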

7. THE KEY IS ENABLING THE ECOSYSTEM

The direction of travel is clear. The AI-native RAN is about improving link budgets, keeping the same cell grid, supporting smaller devices and using AI intelligently to get more out of the network. That’s where the real gains will come from. Across all of these efforts (CSI compression, neural receivers, digital twins and ISAC), the common theme is enabling a faster innovation cycle. Rohde & Schwarz focuses on providing the measurement, validation and emulation platforms that allow others to innovate more quickly. As AI-RAN becomes central to 6G, that role will only grow in importance.
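
A link-budget gain can be translated into coverage with the log-distance path-loss model, in which path loss grows as 10 n log10(d) for a path-loss exponent n. A minimal sketch, assuming that model and illustrative exponents; with a fixed cell grid the gain appears instead as extra margin at the existing cell edge, and the range figure shows what that margin is worth.

```python
def range_extension(gain_db: float, path_loss_exponent: float) -> float:
    """Cell-range multiplier for a link-budget gain under a log-distance path-loss model."""
    return 10 ** (gain_db / (10 * path_loss_exponent))


for n in (2.0, 3.0, 3.8):  # free space, typical outdoor, dense urban (illustrative)
    r = range_extension(3.0, n)
    print(f"exponent {n}: 3 dB -> {100 * (r - 1):.0f}% more range, "
          f"{100 * (r * r - 1):.0f}% more area")
```

Under these assumptions, 3 dB is worth roughly 20 to 40% more range, depending on the environment.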

About the author:

Alexander Pabst, Vice President Market Segment Wireless Communications, Rohde & Schwarz. In his role as Vice President of the Market Segment Wireless Communications, Alexander Pabst is responsible for driving the strategic direction of the wireless technology product range at Rohde & Schwarz. Alexander has more than 25 years of experience in the wireless industry. After spending some years in sales, he joined Rohde & Schwarz to work in various product management and business development positions. Before his current role he was Vice President of the systems group within the test & measurement division, launching a full range of 5G over-the-air test solutions. Alexander represents global test & measurement vendors on the board of the Global Certification Forum (GCF). He holds a degree in physics from the University of Bonn.

- This is a sponsored post