Bruno Zerbib, Orange’s Chief Technology and Innovation Officer, is on stage at the operator’s OpenTech event, talking about a future vision for the network.

Orange’s OpenTech event is a multi-room demo fest, held at the operator’s Orange Gardens campus in southern Paris.

One room is dedicated to post-quantum cryptography, with a wall of vintage Minitels used to illustrate the superposition state of a qubit – a nod to past innovation. The serious side of the room shows off the operator’s live quantum comms service in the Paris area, as well as different post-quantum cryptography methods.

There are rooms hosting future network exhibits – labelled Networks for Humans. One demo features a hybrid cloud RAN from Nokia and AWS, with AI agents sited on the DU and/or at the CU location advancing dynamic network slicing capabilities. In the demo architecture, Nokia’s inline accelerator card provides the signal processing acceleration, as announced at MWC 2025. But the demo steps up the slicing capabilities, with intent-based slicing from a Nokia agent monitoring and optimising the network in conversation with an agent at the CU site.

Then there’s further AI-enabled network monitoring, including Gen-AI netops that can readily classify and configure clusters of cells in terms of how they are behaving, rather than on geographic or pre-labelled terms.

A network digital twin demo shows how the blend of physical and virtual via computer vision can enable AR-assisted technicians to update the digital twin according to the reality of the network.

Then there’s site drone inspection, where Orange currently has about 50 drone pilots in operation surveying mobile towers, and plans to get that number up to around 300. A pilot training drive is in progress, using VR glasses rather than the real world to train pilots. At the moment the drones just provide visual feeds back to operators, but there are plans for computer vision and AI apps.

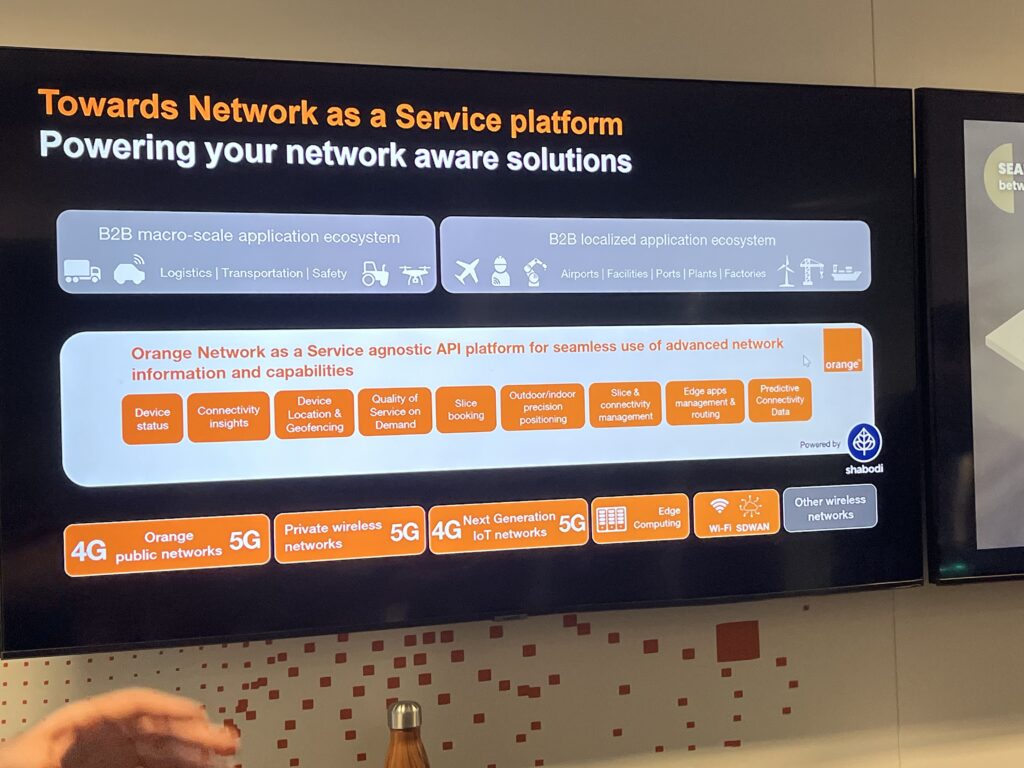

Another set of demos shows what Network APIs can do to speed up onboarding customers to an app, or to deliver enhanced connectivity to a computer vision robot when it detects a potential anomaly (yes it’s another Quality on Demand use case). This is the vision of the NaaS API platform, with network-aware applications.

Ericsson and Huawei have their own rooms, running through their AI for networks, networks for AI, agentic AI use cases. You know the drill. All this is just the networks side of things. There are further rooms dedicated to security and trust, in-home tech, empowering businesses, all of that.

The demos are all products of Orange’s own research function, or of research carried out in partnership with vendors and industry projects. The aim, as with the “I for Innovation” in Zerbib’s job title, is to foreground the operator’s role in hands-on research and the real-world, problem-solving deployment of innovation.

Structuring innovation

And so back to Zerbib. He outlines the current macro-strategic issues facing a company with national-level responsibilities like Orange. LLMs are a black box, containing unknown biases – but they also offer great potential value – so how should they be leveraged and applied responsibly? Governments can strangle access to technology. Customer data can leave the country and bad actors can use AI to attack your infrastructure. The datacentres that serve massive recent AI accelerations are hugely energy hungry.

So, faced with this, how to innovate? Indeed, why innovate? Innovation must bring progress, and what is progress? Progress is the improvement of the human condition. Does it improve the human condition to replace humans with AI? Does it improve the human condition to confirm societal biases via poorly trained AI? What about rampaging energy usage?

Zerbib is tasked, ultimately, with balancing all this. He itemises Orange’s position. Let’s not use AI for the sake of AI, but instead put together a group of philosophers, researchers and scientists to find an ethical way forward. Let’s not rush headlong into super-data centres and thousands of energy-hogging GPUs, although let’s do look forward to nuclear fusion and the near-unlimited energy it could bring. Let’s not hand over our AI futures to a few super-companies or super-power countries, but instead let’s partner with state-level actors to create sovereign AI propositions.

Let’s protect our customers from fakery and scammy AI outcomes, but let’s also enhance their lives with in-home tools and AI-enabled services: for example, African-language AI agents that can open up digital opportunity on a continent where the average age is under 20, and where the aspiration and need for better lives is fierce.

AI in the network, but is that an AI-RAN?

And then there’s the network. How does the fabric of the network change as this responsible application of the AI revolution, or evolution, progresses?

For Zerbib, the move to physical AI – drones, robots – will open up a new requirement for mid-sized AI in the network. At the moment, AI flows are “north-south” between a device and a data centre, which could be in California. But this new AI will require an “east-west” capability, connecting AI from device to network location to core.

“The way we think about our network infrastructure is as a living thing: we think about it as being a platform with distributed computing capabilities. And in the world that is coming, that is developing before our eyes, we’re going to see more and more expectations to use some of that distributed intelligence. And to make that happen, you cannot have a north-south communication where you have very limited intelligence running on the device, and everything else is 1000s of miles away. You’re going to need some east-west communication as well, which means you’re going to have some distributed AI.”

Tied to this is the development of exposed connectivity: a set of network services that Orange makes available through APIs. “So the way we’re thinking about our network is as a distributed computing platform that’s going to be more and more powering AI workloads in the future.”

AI-RAN or not?

So does this distributed compute infrastructure sound like the AI-RAN vision, which proposes building a RAN infrastructure where the baseband signal processing happens on general purpose processors whose capacity is also used for network-focussed AI workloads and for third-party AI applications?

“I expected this question,” says Zerbib. He explains that the evolution of AI-RAN might not happen on the same timescale as the evolution of the AI compute infrastructure, which means it might not make sense to couple the two together. The two stories are a bit “disconnected”, as he later tells TMN. And yet, there could also be benefits from the AI-RAN approach.

First, though, the operator has to assess whether GPU-based RAN processing will actually deliver the efficiency and TCO benefits it needs.

“The first question is can I transition from ‘old radio technology’ to GPUs as a more effective way of processing signal – and is it going to allow me to do that in a way that will be more efficient, cost efficient, increase capacity? We are working with leading vendors right now to experiment on that. And if potentially this is something that could be a 20, 40 or 50% efficiency improvement – that could be a life changer. But we are not sure yet what is the extent of that impact. We think from a technology perspective it looks like a viable perspective, and it looks like it’s promising. But we have to be cautious and right now we are working on trying to get a sense of how things are going to shape up.”

Zerbib points out that these assessments also have to take into account the underlying speed of change at the hardware layer.

“It’s not just about the algorithms, and we’re really talking about very small size, super fast LLMs. It’s also about hardware that’s going to evolve much faster. We’re talking about a yearly update cycle for GPUs, which means the picks we’re going to make this year might change in a year or two.

“But it’s very promising, it’s really interesting and clearly we’re looking at this. And obviously experimentation and being agile is the way forward. We’re not going to do a massive transformation without understanding exactly the cost benefits equation.”

The AI-RAN use case question

Zerbib has highlighted the potential step change an efficient GPU RAN architecture could bring, but he is also assessing the potential use cases an AI-RAN could bring to the operator’s customers. That’s the flip side of the AI-RAN business model – the ability to use the infrastructure for AI workloads. One thing he is sure about is that, AI-RAN or not, the direction of travel to a distributed AI infrastructure is locked in.

“We are working with other telcos and AI leaders. We go to China, to the US, to look at emerging use cases and we are quite sure that it will happen. We don’t know yet the scale. This notion of having a network being a distributed computing platform becoming more prevalent, and every workload becoming an AI workload over time – this is clear. That’s the direction where it’s going.

“What we are obsessed with now are the use cases we need to prioritise, both on the consumer side and on the B2B side. So, yes, we think this is a very important piece of technology with, again, two different dimensions, running AI workloads, or using AI to deal more efficiently with signal processing.”

After the press conference, Zerbib privately indicates that the notions of small-model AI and super-fast RAN processing on the GPU still need to be proven. And the potentially significant market signal of Nvidia’s investment in Nokia could be read as being about making Nokia look more American, and making critical infrastructure more American. (Maybe he read this piece!)

Laurent Leboucher, Orange’s Group Lead Technology Officer, also speaking at the edge of the event, says that it’s currently really only radio signal processing that requires microsecond latency. There aren’t many B2B use cases that require latency lower than a few milliseconds, and you can deliver that from relatively far away. There are very few B2B apps that need that very low latency at the edge. Even a smart factory latency budget is measured in milliseconds: optical latency from Paris to Marseilles is 5 milliseconds.

“If the edge is Paris, then that’s OK for me,” Leboucher says, indicating that, for now, he has yet to buy into the AI-RAN model.
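
As a rough sanity check on the latency figure Leboucher cites, the propagation arithmetic can be sketched in a few lines of Python. This is a back-of-envelope illustration, not Orange’s own calculation: the refractive index (1.47) is an assumed typical value for silica fibre, the 1,000 km route length is a hypothetical round number chosen for illustration, and the function names are ours. Light in fibre covers roughly 200 km per millisecond, so a 5 millisecond budget corresponds to something in the region of 1,000 km of fibre one way.

```python
# Back-of-envelope propagation delay for light in optical fibre.
# All constants other than the speed of light are illustrative assumptions.

C_VACUUM_KM_PER_S = 299_792.458   # speed of light in vacuum, km/s
FIBRE_REFRACTIVE_INDEX = 1.47     # assumed typical value for silica fibre


def one_way_delay_ms(route_km: float) -> float:
    """Propagation-only delay, in milliseconds, over route_km of fibre."""
    speed_km_per_s = C_VACUUM_KM_PER_S / FIBRE_REFRACTIVE_INDEX
    return route_km / speed_km_per_s * 1000.0


def round_trip_delay_ms(route_km: float) -> float:
    """Out-and-back propagation delay over the same fibre route."""
    return 2.0 * one_way_delay_ms(route_km)


# Hypothetical route length: a round 1,000 km, not a surveyed fibre path.
ROUTE_KM = 1000.0
print(f"one way:    {one_way_delay_ms(ROUTE_KM):.2f} ms")
print(f"round trip: {round_trip_delay_ms(ROUTE_KM):.2f} ms")
```

Real routes add switching, queuing and processing delay on top of propagation, and the article does not say whether the 5 millisecond figure is one-way or round-trip, so the sketch only shows that the order of magnitude is plausible.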

The relevance of research

With both the technology and the business case models throwing up so many questions, AI-RAN is a prime example of the benefits of having a strong internal research capability that is allied to the commercial requirements of the business, as well as strongly aligned with external partners. That is the structure that Orange hopes and believes will give it a lead in the future.